Articles & case studies

Including Data Governance as Part of your ERP Implementation

Implementing new business applications is a massive investment that requires spending significant sums on defining business requirements, defining terms, configuring the solution, building RICEW objects, cleansing data, and transforming data. After working on dozens of implementations, we find that organizations frequently overlook the opportunity to use the implementation to upgrade data governance and fuel long-term data performance.

Upgrading data governance as part of the implementation doesn’t need to be a big lift and can be accomplished in bite-sized chunks throughout the project timeline. If an organization doesn’t consider data governance beyond the new application controls, the data will only be as good as the application was configured. This myopic approach can lead to disaster. We’re currently working with a Fortune 500 client who implemented a new HR solution without Definian’s guidance. Their implementation lacked the governance to control the data within the new system. After go-live, it quickly became impossible to get a trustworthy report from the new system in a timely manner. After several months of untangling the data and working with the business to redefine what its requirements should be, they’re finally closing in on trustworthy reporting.

The lack of governance applied to this HR solution hindered the company in two primary ways. First, they were unable to generate trustworthy reports for months, which made it impossible to meet the basic HR reporting requirements for a large enterprise. Second, the focus on tactical data cleanup and definitions prevented this department from using the data to drive the organization forward.

The firm now has clean definitions for many of its attributes and is developing training materials to help ensure that divisions across the enterprise are using the attributes and the application consistently. The business requirements can be used to update the application and execute ongoing quality reporting. Most importantly, the governance they’re using will enable the focus to shift towards improving HR rather than trying to generate standard reports.

To avoid the pitfall this HR department encountered, the following activities are a good start to upgrading data governance during the implementation without impacting the timeline or licensing another piece of software. While the following sections may seem overwhelming at first blush, these activities can be spread out across the entire implementation and even after go-live. The important part is that they get scheduled while data is on everyone’s mind.

Kick off Data Governance - If you don’t have an active data governance organization within your firm, set some time aside towards the beginning of the implementation to discuss data governance, what it is, and how it can be used to improve everyone’s work. If you have active data governance, it’s a great opportunity to talk through the project with the governance team and project teams together. Outline the program's goals, how governance can help with implementation, and how business can continue after go-live. The important part of the discussion is to get people thinking about data governance.

Document the Gaps and Define the Best Practices - Identify current data governance strengths, weaknesses, gaps, and risks, and define future state data governance best practices. While working to identify the gaps and best practices, start to identify the key people who will be the driving force behind ongoing data governance. The exact roles vary from organization to organization, but some common roles are executive champion, data governance manager, data governance council, and data steward.

Define a Data Governance Charter - Similar to the program charter that is created as part of the Oracle implementation, create a data governance charter to keep everyone on the same page about how we’re going to make sure data is enabling the organization to meet its needs. The charter usually contains the following sections: problem statement, responsibilities, goals, benefits, scope, assumptions, dependencies, activities, deliverables, risks, and critical success factors.

Collect the Metadata - There will be many meetings discussing what is an active customer, what is a line of business, what is an item type, and how to classify an item for a given item type. Don’t lose that knowledge in the opacity of the system. Make sure the decisions are documented and create a library that contains the terms, requirements, and all the metadata that you can. This will speed up future development and the potential future implementation of governance software.

Build out the Organizational Framework - Formalize the resourcing decisions made while documenting the current gaps to create the data governance organizational framework. Identify each of the important roles that will make up the data governance council and office and their responsibilities.

Assemble a Data Governance Handbook - If your organization doesn’t already have a handbook that contains the organization’s policies, procedures, roles, and responsibilities for data governance, define what should be included in your organization’s data governance manual. This handbook is the go-to authority for all things data governance. It can be quite difficult to create, but it doesn’t have to be done in one go. It is also a living document that is meant to evolve with the business over time. The handbook frequently contains the mission, guiding principles, organizational framework, roles, responsibilities, communication framework, metadata catalog, policies, standards, metrics, processes, tools, and resources. If there is something data governance related, it or a reference to it should be found within the handbook.

Create a Communication Matrix - Effective communication is critical for a successful data governance program. To clarify communication protocols, create the protocol for how to communicate data governance policies, procedures, issues, and updates. The one created by Robert Seiner, with his non-invasive data governance approach, is excellent. It is recommended to work on defining the communication matrix during the initial set-up of the data governance organization, as it provides a mechanism for defining a clear communication structure — heading off the game of telephone before it starts.

Define the Data Governance Scorecard - Define the metrics and mechanisms that will let you know if data governance is effective at the organization. These metrics align with Generally Accepted Information Principles (GAIP) and measure data infrastructure, security, quality, and financial goals. They can be about reducing the number of legacy databases, increasing employee skills, and making sure that the costs are in line with the committed return on investment.

Build out the roadmap - Chances are, at the time of go-live for the main implementation there will be many activities and sections that still need to be completed or updated within the governance handbook. Create a roadmap to keep improving and building data governance operations. This is a journey and not a project. Defining the next steps will continue to provide a vision for how the organization can better serve its constituents.

Establish data governance council meeting cadence - Getting the data governance council together is important to keep the data improvement momentum going. During the first meeting, show the group where the data has been, how far the organization has come, and where it is going. The format for the meeting depends on your organization's culture. The important pieces are that all data related initiatives are discussed, and issues are identified, discussed, and solved.

While these deliverables may seem like a lot, if they are spread across an implementation or at least planned to be executed at the tail end of the implementation, they are manageable. Additionally, you will be able to get more out of your new system and recognize benefits over the long term. We see that organizations that have even a minimal data governance operation at the time of go-live have the mechanisms in place to measure what’s important, communicate issues, and quickly come up with resolutions.

If you have questions about data, data governance, or data migration, let’s spend 30 minutes together to go through your questions and approach on your project.

3 Keys to Drive Your Payroll Validation Success

Digital transformations are a major investment in your organization’s future. However, the success of such an implementation can hinge on payroll validation. During this critical step, the project team has the important goal of ensuring that employee paychecks are being calculated correctly. By the time your new system is live, every single earning, deduction, and tax must be accurate.

Payroll testing is a time-consuming process, typically several weeks long. If more issues are encountered than expected and they are not addressed promptly, the entire project can be delayed.

That said, payroll validation must be focused and efficient. We’ve identified 3 keys needed to accomplish an enterprise payroll validation:

Understand that Payroll issues cannot wait to be fixed.

Payroll validation is a critical-path item. Going live with incorrect payroll will lead to backlash and reduce confidence in leadership. This is exactly what happened to the City of Dallas in 2019 when they failed to capitalize on their payroll testing and went live with faulty configuration. As a result, first responders were not receiving their benefits, and they were not being paid properly. This culminated in the story making national news after the president of the Dallas Fire Fighters Association penned an open letter to city officials about the negative impact the issues had on crew morale. Fortunately, Definian was pulled in to resource the project and we secured the benefits that our first responders were entitled to. You can read more about this here.

This story should serve as a warning to all other organizations undergoing a similar transformation. Seldom are there issues that come up during payroll validation that can wait to be solved. Instead, while the project team is all hands on deck for payroll testing, they should ensure that issues are resolved as soon as possible after discovery to prevent any incorrect paychecks being sent out after go-live.

Establish and enforce expectations across your project team

When validating payroll, having the right people is key. The team tasked with this job should have intimate familiarity with how payroll is currently run, how it will be run in the new system, and the importance of the job they are doing. This knowledge empowers them to more quickly resolve issues so that testing can continue on-schedule. Teammates across the project must also be highly organized so that as defects are discovered, they are quickly logged in a central location that is reviewed at least daily, with clearly defined action items, owners, and deadlines. Accepted tolerances should be documented, helping the team stay focused on a clear goal.

This applies not only to the client side, but vendors as well. Functional consultants, data migration experts, and integration consultants all must be aware of the process so that issues can be addressed effectively, regardless of the root cause. Without involvement from appropriate subject matter experts, a single issue can easily derail testing and, by extension, the entire project timeline.

Use insightful metrics to drive project direction

Due to the high-stakes nature of payroll validation, project leaders must feel confident and secure in their decision to move to the next steps of the project. To do this, they must have access to insightful metrics.

For example, imagine a scenario where legacy and target system payroll deductions, summed across all employees, are equal to the exact cent. Lurking under the surface, however, is a healthcare deduction that is severely in excess, and wage garnishments that are not being deducted at all. In this scenario, things may appear fine at a high level, but the reality is far more severe when examined at a detailed level. Alternatively, known issues may be driving variances that skew the overall metrics, or the metrics for an individual division or agency. As a leader, it is important to ensure that the results are viewed holistically and through multiple lenses so that smart decisions can be made. Failing to do so can lead to false positives and unexpected issues after go-live.
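The scenario above can be sketched in a few lines of Python. This is a minimal illustration, not a description of any particular validation tool: the employee IDs, deduction codes, and amounts are made-up sample data, and the function names are hypothetical. It shows how a grand total can reconcile to the cent while the per-deduction view exposes offsetting errors.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical sample rows: (employee_id, deduction_code, amount).
legacy = [
    ("E1", "HEALTH", Decimal("100.00")),
    ("E1", "GARNISH", Decimal("50.00")),
    ("E2", "HEALTH", Decimal("100.00")),
    ("E2", "GARNISH", Decimal("50.00")),
]
target = [
    ("E1", "HEALTH", Decimal("150.00")),  # healthcare over-deducted
    ("E2", "HEALTH", Decimal("150.00")),  # garnishments missing entirely
]


def totals_by_code(rows):
    """Sum deduction amounts per deduction code."""
    totals = defaultdict(Decimal)
    for _employee, code, amount in rows:
        totals[code] += amount
    return dict(totals)


def variances_by_code(legacy_rows, target_rows):
    """Return target-minus-legacy variance for every deduction code seen."""
    legacy_totals = totals_by_code(legacy_rows)
    target_totals = totals_by_code(target_rows)
    zero = Decimal("0")
    return {
        code: target_totals.get(code, zero) - legacy_totals.get(code, zero)
        for code in sorted(set(legacy_totals) | set(target_totals))
    }


# High-level metric: total deductions in target minus total in legacy.
grand_variance = sum(a for *_, a in target) - sum(a for *_, a in legacy)
# Detailed metric: the same comparison broken out by deduction code.
detail = variances_by_code(legacy, target)

print("Grand variance:", grand_variance)
for code, variance in detail.items():
    print(code, variance)
```

In this sample the grand variance is zero, yet the detail shows the healthcare deduction running high and the garnishments missing. The same breakdown could be repeated by division or agency to give leaders the multiple lenses described above.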

Final thoughts

Keeping these points in mind will play a major part in the success of your digital transformation. While the process does come with risk, when done right, it helps ensure your organization has a seamless transition to your new system and maximizes the benefits reaped from it.

Remember that payroll is not merely data. It has a real impact on the day-to-day lives of your employees, and they rely on its accuracy. We all expect employees to be good stewards of organizational resources and time spent “on the clock.” Likewise, project leadership needs to be the best possible steward of employee payroll.

Part of that stewardship means finding the right project partners. If you would like to learn more about best practices that we employ at Definian and see if our services fit your needs, contact us below.

IDMP Compliance: Business Priority, Governance Imperative

The ISO Identification of Medicinal Products (IDMP) standard is one of the most complex data challenges facing life sciences companies today. The regulation requires unique identification of pharmaceutical products using a standardized language to improve the exchange of data between pharmaceutical companies and global regulators.

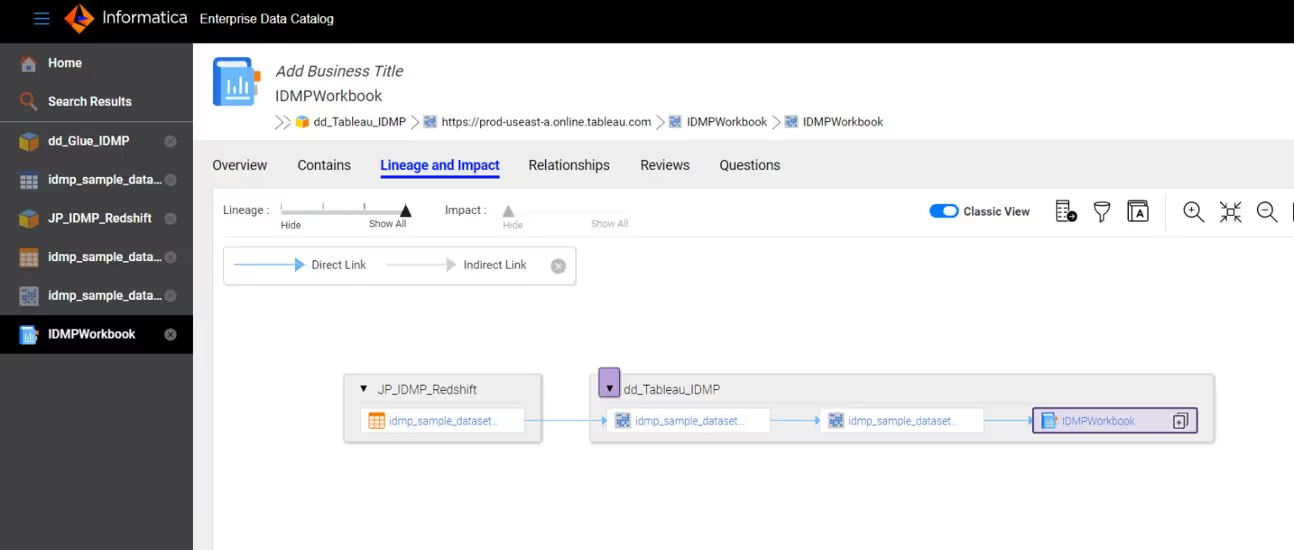

Meeting the full scope of the IDMP regulation (which includes five distinct standards) will require ongoing access to accurate, high-quality, and trustworthy data collected from multiple systems across your enterprise. Good data governance is critical. And it’s why we’ve partnered with Informatica to deliver a comprehensive solution to help you support better data stewardship, data quality, and data monitoring.

An End-to-End Data Governance Solution

Definian has designed a comprehensive data governance solution to address IDMP compliance from start to finish. We begin by establishing business glossaries that define terms so that data users across the enterprise share a common language. We then identify critical data elements from across your source systems and display the lineage, owners, and stewards of that data in a searchable data catalog. We also establish data quality rules and metrics to apply against your data so that you can easily monitor governed assets with 3 compliance dashboards. To simplify reporting with consistent data values, we harmonize your reference data according to IDMP rules. Our IDMP solution is based on Informatica Axon, Enterprise Data Catalog, Informatica Data Quality, Informatica Reference 360 and Informatica MDM (see Figure 1).

Definian has experience delivering data governance solutions that help organizations improve data management processes to meet stringent compliance regulations. We work across policy, data, and technical domains to help you achieve results faster by digging deep into data policies to deliver the strategic solutions your organization needs to transform regulatory compliance into a business differentiator.

Establish a Foundation for Innovation

As the regulatory landscape continues to grow more complex, life sciences organizations are seeking better ways to adapt and respond. By establishing a sustainable data governance framework now, you can meet new imperatives and priorities with clarity and intelligence, integrating high quality, traceable data into your organization’s ongoing compliance programs to better support its business strategy, mission, and goals.

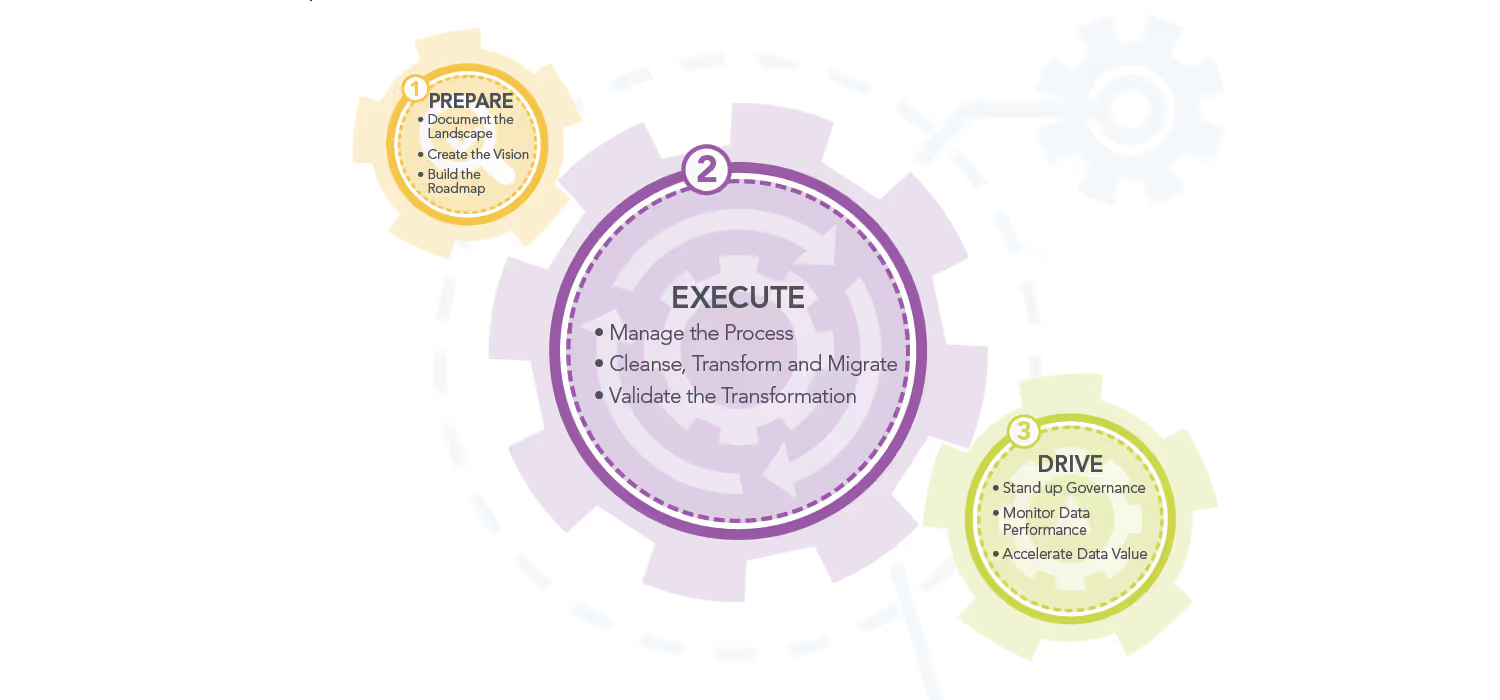

Best Practices Data Migration Services Explained

A comprehensive data migration approach covers three phases: preparing the target vision, executing the transformation, and driving long-term data performance. These three phases enable a successful implementation through a combination of data governance, data management, and traditional data migration activities that align technical execution with business goals. Read the steps in the attached PDF to learn what's needed to maximize the effectiveness of your migration.

What is Data Monetization?

Data Governance programs have lacked business adoption because there is often a limited tie-in with financial benefits. Let’s face it, if the regulators are not breathing down your neck, it is hard to get senior management to continue to sponsor data governance.

We define Data Monetization as follows:

Data Monetization leverages data and information to expose opportunities to increase revenues, reduce costs and drive competitive advantage while mitigating risk.

Here are some use cases for Data Monetization:

- Grow Revenues – A retailer improves cross-sell and up-sell opportunities based on a single view of the household

- Reduce Costs – A manufacturer improves the quality of billing addresses to reduce Accounts Receivable and Working Capital

- Manage Risk – A bank reduces credit risk by establishing a unified view of counterparty risk across affiliates and subsidiaries

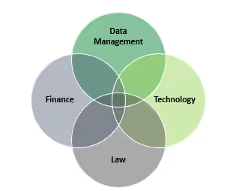

Data Monetization is a cross-functional discipline that draws from best practices in Data Management, Technology, Law and Finance.

Renovus Acquires Majority Stake in Premier International (now Definian)

CHICAGO, October 11, 2022 - Renovus Capital Partners (“Renovus”), a Philadelphia-area based investment firm, announced today that it has acquired a majority stake in Premier International Enterprises, LLC (“the Company”). Founded in 1985 and headquartered in Chicago, IL, Premier International is a technology services firm offering solutions that reduce the risk associated with complex data challenges through innovative technology and consulting services. Premier International leverages its proprietary software tool and team of business consultants, software developers, and subject matter experts to deliver its data-related services to the Company’s deep customer base of large enterprises.

Renovus’ investment comes during a period of exceptional growth at Premier International, which was recently named to the prestigious Inc. 5000 list of the nation’s fastest-growing private companies. With Renovus’ support, Premier International plans to use the investment to further improve its software product, pursue strategic acquisitions and enhance its service offerings to clients.

“Renovus brings significant experience investing in the technology services industry, and we are excited to leverage their knowledge to refine our strategy, scale our delivery resources, and expand our offerings both organically and inorganically,” said Craig Wood, CEO of Premier International. “We remain focused on accelerating the future for our clients by bringing transformative data solutions to them and we are confident Renovus and their investment will unleash the full potential of Premier International to support that goal.”

“As organizations scale and the market for big data grows, enterprises have been more and more focused on having clean, quality data in a centralized location,” said Atif Gilani, Founding Partner at Renovus. “Premier International is well-positioned to capitalize on these trends due to its differentiated data migration offerings coupled with new capabilities recently introduced, including master data management and data governance.”

As a platform investment for the firm, Renovus is actively identifying potential acquisitions that will help expand the current product and service offerings of Premier International. “With Premier International’s strong leadership team and clear vision for the future, we are excited to provide the necessary resources to accelerate the growth and success of the Company,” said Jane Buckley, Senior Associate at Renovus.

Ascent Advisory Partners served as financial advisor to Premier International. DLA Piper served as legal counsel to Renovus.

About Premier International (now Definian)

Premier International Enterprises, LLC is a Chicago-based technology consulting firm specializing in data migration. The company's innovative services and Applaud® software reduce the overall risk in a technology transformation and ensure projects remain on track in even the most complex environments. Founded in 1985 and with over three decades of successful execution, our solutions have a proven track record across a wide array of industries and applications. We have the knowledge and understanding to provide our clients with tailored solutions to address their specific data migration challenges. For more information, please visit Definian.com

About Renovus Capital Partners

Founded in 2010, Renovus Capital Partners is a lower middle-market private equity firm specializing in the Knowledge and Talent industries. From its base in the Philadelphia area, Renovus manages over $1 billion across its three sector focused funds and other strategies. The firm’s current portfolio includes over 25 U.S. based businesses specializing in education and training, healthcare services, technology services and professional services. Renovus typically partners with founder-led businesses, leveraging its experience within the industry and access to debt and equity capital to make operational improvements, recruit top talent, pursue add-on acquisitions and oversee strategic growth initiatives. More information can be found at www.renovuscapital.com.

5 Questions to Ask Before Starting Your Oracle Cloud Implementation

Can Oracle Cloud Fusion meet your business needs now?

Oracle Cloud Fusion has a wide range of modules, from HCM to Supply Chain Management, and is constantly developing more. However, certain smaller modules are still in development or are not yet available. Make sure that the system as it is today can accommodate your business, or you may end up needing additional software and integrations.

Who are your Subject Matter Experts (SMEs) and how much time can they dedicate to the project?

Start identifying support resources for them early. Ensure that your project timeline is designed to accommodate existing crunch times, like financial resources needing to deal with month end close. The more time that your subject matter experts can devote to the project, the more likely it is to be completed correctly and on time.

Where do your business processes involve more than one area?

Get those SMEs talking early and often. Ensure that any systems integrator you are using is aware of these complexities early so that they can help you design your solution correctly the first time. It is much easier to design a complex system from the start than to redesign it to incorporate surprises.

How can we get data right the first time?

Unlike on-premise solutions, once data is loaded into an Oracle Cloud pod, it cannot be deleted except in rare cases. Once data is loaded into production, it is there until you move to a new system. Plan for your teams to thoroughly review your converted data every test cycle so that by the time your new system goes live, everyone is confident that they have the necessary data to do their job.

What is the reporting solution for historical data?

Converting more historical data than you absolutely need will cost you time (increased cutover and possible performance issues in your pod) and money (more pod space and time spent cleaning up historical data).

What's the Difference Between Data Validation and Testing?

Data validation and testing are both crucial when migrating data to any system. It’s important to understand the difference as they are frequently mistaken to mean the same thing. If validation and testing are not performed thoroughly or not performed in conjunction with one another, the ensuing issues can easily derail an implementation.

What is Data Validation?

Data validation is the process of checking the accuracy, quality, and integrity of the data that was migrated into the target system. When performing data validation, it is important to understand that not all of the data will look exactly as it did in the legacy system. The structure of the master data may have changed, and values may also differ between the legacy and target systems. Payment terms, for example, may be stored as ‘Net 30’ in the legacy system but change to ‘N30’ in the target system. Understanding how and what is changing is important when performing data validation activities, and the resources performing the validation should have that understanding before they begin.
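As a rough illustration of validating against expected changes rather than expecting identical values, the Python sketch below compares legacy and target payment terms through a crosswalk. Only the ‘Net 30’ to ‘N30’ mapping comes from the example above; the ‘Net 60’ mapping, the supplier keys, and the function name are assumptions made for the sake of the example.

```python
# Hypothetical crosswalk of legacy payment terms to target values.
# Only 'Net 30' -> 'N30' is taken from the article; 'Net 60' is assumed.
PAYMENT_TERMS_XWALK = {"Net 30": "N30", "Net 60": "N60"}


def validate_payment_terms(legacy_by_key, target_by_key, crosswalk):
    """Return (key, issue) tuples for records that fail validation."""
    issues = []
    for key, legacy_value in legacy_by_key.items():
        if key not in target_by_key:
            issues.append((key, "missing in target"))
            continue
        expected = crosswalk.get(legacy_value)
        if expected is None:
            issues.append((key, f"no mapping for legacy value {legacy_value!r}"))
        elif target_by_key[key] != expected:
            issues.append(
                (key, f"expected {expected!r}, found {target_by_key[key]!r}")
            )
    return issues


# Made-up sample data keyed by a hypothetical supplier number.
legacy_terms = {"SUP-001": "Net 30", "SUP-002": "Net 60", "SUP-003": "Net 30"}
target_terms = {"SUP-001": "N30", "SUP-002": "N30"}

issues = validate_payment_terms(legacy_terms, target_terms, PAYMENT_TERMS_XWALK)
for key, issue in issues:
    print(key, issue)
```

Here SUP-001 passes even though the stored value changed, because the change matches the documented mapping. SUP-002 and SUP-003 are flagged for review.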

What is Testing?

Throughout the implementation of a new system, there should be multiple opportunities to test the target system with migrated data during planned test cycles. Preparing for an implementation is an iterative process. Issues will be identified in each test cycle, whether with the system configuration or the migrated data. The various teams will then work together to resolve those issues in preparation for the next test cycle, with the goal of significant improvement over the previous cycle. This does not mean, however, that there will not be any issues. Resolving one issue often uncovers additional issues that the original issue had hidden. By the User Acceptance Testing (UAT) cycle, the major issues should have been identified and resolved, with the goal being minimal issues and changes after UAT heading into go-live.

Testing is not data validation. Testing is much more involved than performing basic data validation. Since data can drive system functionality, it is important to perform data validation and testing in tandem. The data may look correct, but that does not mean the system is functioning as expected. Testing involves more advanced navigation of the target system along with executing typical business processes in the new system. The results of executing those processes should confirm if the system and functionality is working as expected and meets the requirements needed by the business. All areas of the business should execute end-to-end testing.

Validation and Testing for Success

After witnessing and being a part of many implementations, I can confidently say that one good indicator of a successful go-live is the amount of thorough data validation and testing performed throughout the project. Ensuring your project has a comprehensive and robust data validation and testing plan will put the project on the path to success!

Migrating from IFS to Oracle Cloud for a Major US Defense Contractor

Background

In April of 2021 one of the largest providers of technology services and hardware for the United States government embarked on a mission to modernize their Supply Chain Planning solution. The goal of this modernization effort was to reduce costs, gain efficiencies, and drive innovation so that the company could better serve its governmental, defense, and intelligence clients. To make this implementation possible, Procurement, Inventory, and Order Management data would first need to be migrated from their legacy IFS system to Oracle Cloud. One priority of the migration project was to enrich data as it was extracted from IFS so that it could drive enhanced business capabilities and intelligence in Oracle Cloud.

Connecting Different Sources

One challenge that was identified early in the process was that while the Supply Chain Planning was moving to Oracle Cloud, the accounts payable and other financial data remained in a separate Oracle EBS ERP system. There were several impacts of this system design on the data conversion process.

During the migration process, Definian needed to make sure that any purchase order that still had an open accounts payable amount would be migrated into Oracle Cloud. Using the Applaud tool, Definian was able to connect to Oracle EBS directly and confirm that all purchase orders with an open AP amount were converted.

On top of this, the Supplier data needed to exist in both ERP systems after the Oracle Cloud system went live. To ensure that the data stayed in sync between the two sources Definian leveraged their deduplication process to merge Suppliers that had already been merged in Oracle EBS during a prior migration. Definian also provided a detailed mapping showing how Supplier data had been mapped between IFS, Oracle Cloud and Oracle EBS.

Validating Manual Data Collection

During the project design, it was identified that a significant, several-month effort would need to be undertaken to collect additional data that was not present in the legacy system but was needed for business operations in Oracle Cloud. The project team would need to reach out to hundreds of individuals across dozens of teams asking for additional data points.

Definian was able to mitigate the risk that comes with data collection by building out a complex migration solution to apply these user-provided values to the correct Oracle Cloud attributes. Further, Definian leveraged our thorough data pre-validation process to ensure that the attributes required for each purchase order record were provided and that the values fell within the lists of values available for use. Definian went as far as validating the values provided for custom Oracle Cloud DFFs to ensure that they would be accepted by the system.
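The kind of pre-validation described here can be pictured with a short Python sketch. It is an illustration under stated assumptions, not the process used on the project: the attribute names, the list of values, and the sample records are all hypothetical.

```python
# Hypothetical required attributes and lists of values (LOVs).
REQUIRED = ("buyer", "ship_to_location", "project_type")
ALLOWED_VALUES = {"project_type": {"CAPITAL", "EXPENSE"}}


def prevalidate(record):
    """Return the pre-validation errors for one collected record."""
    errors = []
    for attr in REQUIRED:
        value = (record.get(attr) or "").strip()
        if not value:
            errors.append(f"{attr}: required value missing")
        elif attr in ALLOWED_VALUES and value not in ALLOWED_VALUES[attr]:
            errors.append(f"{attr}: {value!r} not in list of values")
    return errors


# Made-up user-provided rows, keyed by a hypothetical PO number.
collected = [
    {"po_number": "PO-1", "buyer": "J. Doe",
     "ship_to_location": "DOCK-A", "project_type": "CAPITAL"},
    {"po_number": "PO-2", "buyer": "",
     "ship_to_location": "DOCK-B", "project_type": "Capex"},
]

report = {row["po_number"]: prevalidate(row) for row in collected}
for po_number, errors in report.items():
    print(po_number, errors or "OK")
```

Running checks like these before a load attempt lets the people who supplied the data correct it while the request is still fresh, rather than after a load error.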

Creating Note Attachments

In Oracle Cloud, it was determined that there wasn’t going to be enough space on each purchase order to capture the note data that needed to be preserved from the legacy system. The solution was to attach the legacy note details to each purchase order record as a downloadable Excel file. The challenge was that those Excel files didn’t exist prior to the conversion.

Definian was able to rectify this by building out a complex process to scan the legacy database for note details and build out the Excel attachment files. From there, Definian leveraged their SDO Attachment utility to link each Excel file to the correct purchase order record. In total, nearly 25,000 attachment files were uploaded to Oracle Cloud and attached to the purchase orders with a 100% success rate.

Set Up for Success

In the end the client was able to load 100% of the converted data objects and ensure that their Supply Chain Planning solution was set up for success for the years to come. While there were plenty of challenges along the way, the team was able to accomplish the original goal in just about one year. The legacy data had also been standardized and enriched in a way to allow for more efficient operations and more reporting capabilities in the future.

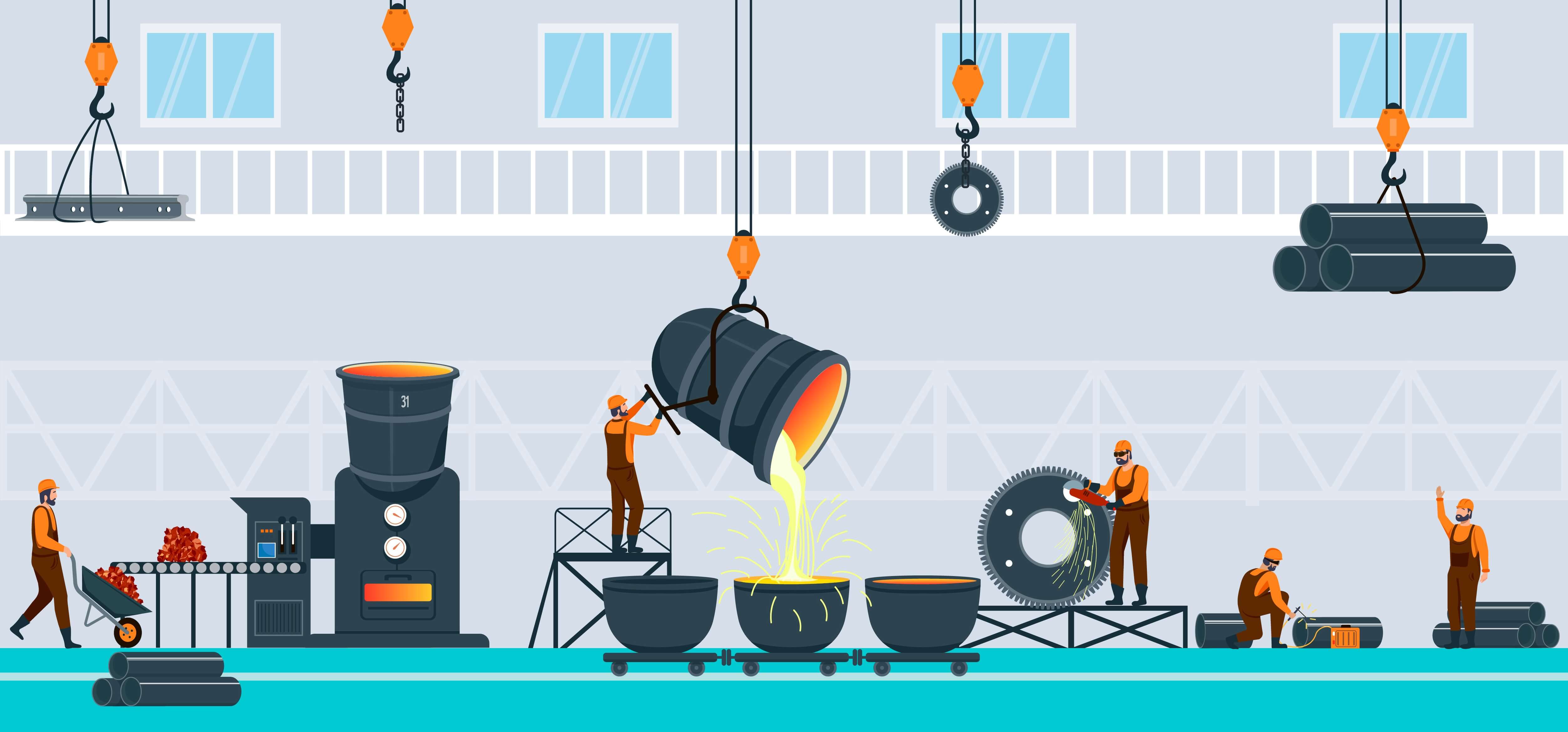

Migrating Asset Management Data to Microsoft Dynamics 365

What is Asset Management Data and Why is it Critical?

Enterprise Asset Management (EAM) involves the maintenance of an organization’s assets. EAM data includes information on physical assets, their locations, maintenance activities, and items needed to support maintenance, repair, and operations (also known as MRO).

Accurate EAM data is critical to making sure the business's assets are recorded correctly. Among other things, high-quality data ensures that the business can purchase the right goods to maintain and repair equipment and operations throughout production. It is important to manage the master-level data and the data that falls under it so that a specific asset is easy to find and the business can take the necessary precautions to thrive.

Choosing Dynamics 365 for Asset Management

This steel manufacturer chose Dynamics 365 as their Asset Management solution so that they could better support and operate their business in a tech-savvy and flexible environment. Innovations in process automation available in Dynamics 365 will accelerate business activities and increase the efficiency of maintenance activities. Dashboards make it possible for the business to discover patterns and trends relating to asset management and drive insights that ultimately help the business minimize costs, maximize profits, and grow.

Reverse Engineering the EAM Solution

Due to complexities in the legacy data and stringent requirements in Dynamics 365, the System Integrator for the project suggested a manual approach for entering EAM data. The manual approach would have required multiple rounds of time-consuming data entry for the business. Worse still, the data entry activity would have landed on the critical path of the project and detracted from other critical activities. Fortunately, this steel company had Definian as their data migration partner, and we knew there was a better way.

Initial EAM data loads into Dynamics 365 were a challenge. The business and the system integrator needed Definian’s support to connect the dots between legacy data structures and the target formats in Dynamics. After running into load errors and discovering missing configuration, the Definian team decided to reverse engineer data structures in Dynamics 365 and build the conversions based on this analysis. This approach included manually entering test data into Dynamics 365, exporting the test data back out, and making trial-and-error adjustments throughout the process. The Definian team first went into a test load environment and exported over 120 data entities related to Asset Management. After this exhaustive process, we analyzed and reviewed every exported data file to uncover connections to in-scope fields. This enabled us to find missing data entities and data configurations that needed to be loaded to minimize load errors and issues. We identified 15 entities and configurations that helped to avoid the load issues we initially encountered. We shared our findings with the system integrator and closed remaining gaps through default values approved by the business.

Leveraging Unconventional Data Sources

The business was able to provide us with spreadsheets outlining an Asset Hierarchy for each specific site. Data included main locations, main assets, child assets, instruction lists for specific activities to be conducted, and more. We built logic into the import application to analyze the asset hierarchy and greatly reduce the amount of time the client needed to spend on the spreadsheet. This exercise helped set the foundation for how this Asset Hierarchy would be displayed. The initial design would have been a challenge, very time consuming, and ultimately would have delayed tests and validations. Our agile handling of this new data source minimized rework for the client. Because of the time we saved, we strategically expanded scope to include MRO Product Items and Asset BOM (Bill of Materials) data entities to better serve growing client needs.

Surprise Challenges

Surprise challenges emerged while loading EAM into Dynamics 365. While loading Functional Locations and Assets, there was an issue where the unique assets were duplicating in the Asset View page. Although the correct asset appeared with its unique Maintenance Asset ID, duplicate child data appeared with no information besides the Asset Name. Thanks to the speed at which we could load and validate data, this was quickly identified and resolved.

To minimize further unwanted surprises, validation steps were incorporated into the conversion process to ensure that spreadsheet inputs would not cause errors during the creation of the data extracts. Some of these validation checks included making sure a child asset level was not skipped and that the asset hierarchy was logically consistent. Other validations checked against Dynamics 365 configuration, uniqueness constraints, and associations with related modules.
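As an illustration of the hierarchy checks described above, here is a minimal Python sketch. It is not the conversion logic used on the project; the `AssetRow` fields, the numeric `level` column, and the `validate_hierarchy` function are hypothetical names assumed for the example. It flags duplicate asset IDs, missing parents, and children that skip a level.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class AssetRow:
    # Hypothetical spreadsheet columns, assumed for illustration only.
    asset_id: str
    parent_id: Optional[str]  # None for a top-level asset
    level: int                # 1 = top level, 2 = child, and so on


def validate_hierarchy(rows: list[AssetRow]) -> list[str]:
    """Return readable error messages; an empty list means the input passed."""
    errors: list[str] = []
    by_id: dict[str, AssetRow] = {}

    # Uniqueness check: each asset ID may appear only once.
    for row in rows:
        if row.asset_id in by_id:
            errors.append(f"{row.asset_id}: duplicate asset ID")
        else:
            by_id[row.asset_id] = row

    for row in by_id.values():
        if row.parent_id is None:
            # Only level-1 assets are allowed to have no parent.
            if row.level != 1:
                errors.append(f"{row.asset_id}: level {row.level} has no parent")
            continue
        parent = by_id.get(row.parent_id)
        if parent is None:
            errors.append(f"{row.asset_id}: parent {row.parent_id} not found")
        elif row.level != parent.level + 1:
            # A child must sit exactly one level below its parent.
            errors.append(
                f"{row.asset_id}: level {row.level} does not follow "
                f"parent level {parent.level}"
            )
    return errors
```

Running checks like these before the extracts are built means a skipped level is reported against the spreadsheet row that caused it, rather than surfacing later as a load error.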

Success Today and for Years to Come

Definian's data migration approach made it possible to go live with high quality data. Since go-live, the client has been able to use the full suite of Microsoft Dynamics 365 features, including many that will reduce maintenance costs and preemptively solve production problems. In addition, the facility now has a reliable source of truth for Enterprise Asset Management data, compared to the problematic data contained in their outdated previous system. The new cloud solution will serve the business for many years to come.

Improving Regulatory Compliance and the Customer Experience through Advisory and Technology Consulting Services

Client: Multi-National Bank with Regulatory Compliance and Data Quality Issues

Background

A growing multinational bank found itself confronted with rising regulatory compliance demands, data security concerns, and issues regarding data quality. The bank’s data management operations were reactive, fragmented, and struggled to cope with the escalating challenges stemming from the organization's growth and regulatory remediation mandates. Definian was engaged to restructure data operations to be in line with the business objectives and to resolve issues related to data availability, compliance, quality, aggregation, and overall operational inefficiencies.

Project Challenges

- A lack of a formalized data quality issue remediation process had drawn the concern of regulators and was negatively impacting financial and leadership reporting

- Limited resources prevented the backlog of data issues from being addressed.

- The inability to accurately unify customer account and product data from multiple sources into a single view severely limited the effectiveness of profit aggregation reporting, targeted marketing, and risk monitoring

- Unstructured and competing data ownership, management, and governance led to inconsistent decision making

Key Solutions Summary

- Definian’s Data Governance-in-a-Box approach met the client where they were to create a roadmap aligned with business objectives and cultural readiness

- Designed and implemented a comprehensive data governance program, organizational framework, and operating model

- Definian’s Data Companion solution clarified critical data element definitions, captured metadata and management requirements, cataloged data quality rules, and established a hub for data operations issue management

- Data stewardship-as-a-service resolved the backlog of data issues and operationalized the Data Governance program

- Designed and facilitated educational curriculum on data governance best practices to accelerate and improve adoption and support regulatory requirements

Outcomes

- Operationalized Enterprise data governance and data management organization

- Identified underlying technical and business logic issues leading to mistrust in customer hierarchy and account ownership

- Recommended steps to resolve and enhance customer and product views without significant investment in new software products

- Improved compliance, financial, and leadership reporting

- Catalog of critical data element definitions, metadata requirements, and governance processes

- A data issue resolution plan that methodically reduced the backlog with the available resources

- Increased cultural awareness on the impact of data to the organization

Easing Data Conversion Woes for an Early Education Client

Project Summary

This early education client wished to upgrade their data architecture from PeopleSoft to Oracle Cloud for enhanced functionality and reporting capabilities. As one of the largest privately owned child care providers in the US, they wished to bring 10 distinct brands and hundreds of schools to their existing Oracle Cloud platform. Definian was enlisted to perform data migration for this Oracle Cloud Financials and Procurement implementation in 2019. Our project team included the client’s functional and technical leads, system integrators from a partner consulting firm, and our own project managers and developers. After months of development and test cycles, the project was put on pause in the spring of 2020 due to the COVID-19 pandemic. Definian resumed project activities in September 2021 to iterate on existing data conversions and support the production loads for a February 2022 go-live, completing six conversion cycles in six months to ensure the utmost data quality in the new system.

Project Requirements

The client’s go-live comprised the following Oracle Cloud data objects: Suppliers, Supplier Banks, Purchase Orders, General Ledger, and Fixed Assets. Source data came from PeopleSoft and Excel reports from business users. To minimize the impact on school operations, the Cutover schedule was incredibly compact. The goal was to load historical General Ledger, Suppliers, Supplier Banks, and Purchase Orders before go-live and load Fixed Assets and the most recent GL period after go-live. So as not to disrupt the pre-go-live processes in PeopleSoft, two separate data snapshots were necessary before go-live and a third was needed for the post-go-live conversions. All data objects were to be loaded into the client’s already live Oracle HCM Cloud environment.

Key Activities at Cutover

We instilled confidence in our process by using our repeatedly enhanced data conversions for our final Cutover loads. At the time of Cutover, we provided 42 distinct files to support the in-scope data objects. We converted over 4 million records, performed pre-load validations using our Critical-to-Quality checks, aided in client validations, and provided load files to the technical leads from the system integrator. To adapt to this client’s high data volume, we generated and provided Excel validation extracts to the client leads as well as Excel extracts and load files to the system integrator instead of the typical Oracle FBDIs (File-Based Data Imports). All data provided at Cutover was successfully loaded into the client’s Oracle Cloud system.

Project Risks

Given the project restart, we faced unique and unanticipated challenges. However, we used our agile approach to adapt to the circumstances and support our client in this unprecedented time.

COVID-19-Induced Challenges

The client and System Integrator (SI) both had notable resource changes after the project pause. We worked with client functional leads who did not have insight into the original data mapping conversations nor the functionality of the target system.

We adapted to these challenges by iterating on previous versions of the Mapping Specification and discussing logic changes with the new client and SI leads. We modified preexisting conversion code and used our Oracle Cloud knowledge to provide solutions. We ensured that their organizational needs were met through repeated validations and transparent communication.

Client Technology Limitations

Large client data volumes caused server slowdown; conversion processes took substantially longer to run during the first cycle after the project pause.

Definian consultants tuned and optimized our data conversion programs to overcome limitations of the client server. Definian also consulted with our internal software team to troubleshoot issues in real time. We achieved faster conversion processing times than ever before and delivered data extracts to the client leads with ease.

Data Transformation/Requirements Changes

The Supplier client leads wanted Supplier addresses standardized in a manner that did not align with the best-practice approach to address standardization.

We worked with the client leads to discuss their desired end state and implemented additional transformation logic to guarantee that their needs would be met. We tested this logic and ran our Critical-to-Quality (CTQ) pre-load validation checks to ensure that all processed records would load successfully into the client’s Oracle environment.

Client leads requested substantial requirements changes on the Purchase Orders data conversion during Cutover.

We typically do not recommend logic changes at Cutover given increased risk with the load to Production. However, based on the client’s needs and understanding of the risk, a consensus was achieved to proceed with the change order. Definian consultants worked with client leads to understand all aspects of their requests and robustly tested the newly coded logic. Client leads thoroughly validated the data extracts with all requirements changes encompassed. The desired changes were reflected and the data loaded fully into the Production environment, saving the client from making substantial manual changes to post-load data.

We found extensive duplication of supplier data within the legacy system. Additionally, the supplier format and structure required for Oracle Cloud was substantially different from that of the client’s existing system.

Applaud’s powerful and flexible data matching engine was used to de-duplicate the client’s supplier data. Definian provided ample reporting tools to the client for them to determine which records should persist in their new Oracle solution. Across multiple test cycles, we gathered, implemented, and tested complex transformation logic that required cross-functional input and validations. Our custom error log reflected the coded changes and any cases that required further client investigation. We received some substantial structural Supplier changes during SIT2 and UAT, but adapted accordingly to meet the client’s data expectations.
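To give a feel for what candidate-duplicate detection involves, here is a minimal Python sketch. It is not how Applaud’s matching engine works; it swaps in a simple normalized-key grouping, and the field names (`supplier_id`, `name`, `postal_code`), the suffix list, and both functions are assumptions made for illustration. Groups it returns are only candidates for the client to review, in keeping with the reporting-and-decision process described above.

```python
import re
from collections import defaultdict

# Legal suffixes ignored when comparing names (illustrative, not exhaustive).
_SUFFIXES = {"inc", "incorporated", "llc", "ltd", "corp", "corporation", "co", "company"}


def match_key(name: str, postal_code: str) -> tuple[str, str]:
    """Build a comparison key from a normalized name and 5-character postal prefix."""
    # Lowercase, replace punctuation with spaces, then drop legal suffixes.
    words = re.sub(r"[^a-z0-9 ]", " ", name.lower()).split()
    core = " ".join(w for w in words if w not in _SUFFIXES)
    return core, postal_code.strip()[:5]


def candidate_duplicates(suppliers: list[dict]) -> list[list[str]]:
    """Group supplier IDs that share a match key; return groups of two or more."""
    groups: dict[tuple[str, str], list[str]] = defaultdict(list)
    for supplier in suppliers:
        key = match_key(supplier["name"], supplier["postal_code"])
        groups[key].append(supplier["supplier_id"])
    return [ids for ids in groups.values() if len(ids) > 1]
```

Exact-key grouping like this misses misspellings and abbreviations, which is where a dedicated matching engine earns its keep.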

Challenges Faced During Data Loads

Oracle Cross-Validation Rules (CVRs) were turned on for Purchase Orders (PO) during UAT without our knowledge. We loaded POs in the Production instance with CVRs on as well.

We dealt with significant PO fallout during UAT due to the CVRs being left on for the loads. We took a multi-step approach to mitigate the situation. First, we created a delta process to capture the POs that fell out in a prior load so we could try to re-load them during the same mock cycle. The Oracle CVRs sometimes over-exclude records, so this process helped us iteratively re-attempt previous fallout and eventually arrive at just the ‘problem’ records. Next, we conducted a second UAT test cycle to re-load POs with additional account transformation logic captured in the PO conversion. The objective of this approach was to mimic the back-end transformations that would be happening in Oracle so that validators could easily identify improper accounting combinations. These combined strategies, coupled with ample pre-load validation, helped us achieve 100% PO load success at Cutover.
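The delta process described above can be sketched roughly as follows. This is an illustrative Python outline only, not the project’s code: `load_batch` is a hypothetical stand-in for the real load step (assumed to return the keys of rejected records), and `po_number` and `max_passes` are assumed names. The loop re-submits only the fallout from the previous pass and stops once a pass makes no further progress.

```python
from __future__ import annotations

from typing import Callable


def load_with_delta(
    records: list[dict],
    load_batch: Callable[[list[dict]], set[str]],
    key: str = "po_number",
    max_passes: int = 5,
) -> list[dict]:
    """Re-submit fallout until nothing remains or a pass rejects everything.

    `load_batch` is a hypothetical callable representing one load attempt;
    it returns the key values of the records that were rejected.
    """
    pending = records
    for _ in range(max_passes):
        if not pending:
            break
        rejected_keys = load_batch(pending)
        fallout = [r for r in pending if r[key] in rejected_keys]
        if len(fallout) == len(pending):
            # No record loaded on this pass: these are the real problem records.
            return fallout
        # Only the fallout (the delta) is carried into the next pass.
        pending = fallout
    return pending
```

The records returned at the end are the ones that still fail when submitted on their own, which is the set that needs investigation rather than another load attempt.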

The client’s Oracle HCM Cloud system was already live; all Procurement and Financial data was to be loaded/merged into this Production environment.

We needed to ensure that all newly converted data would be compatible with the already live HCM Production data. Our previous test cycles loaded data into a mock environment, so the Cutover loads were unique in this manner. We found inconsistencies with certain Location and Department configurations in Production that did not align with our repeatedly tested logic. Given our suite of Critical-to-Quality checks, we were able to quickly identify issues and subsequently notify the client team. We worked alongside the client team to guarantee that all affected Locations and Departments were updated accordingly. All issues were resolved prior to the formal data loads, paving the way for Cutover success.

Given the large data volumes of this client, the System Integrator requested that we provide data for loads using flat text files instead of the typical FBDIs (File-Based Data Imports).

While this may seem like a slight and nuanced change, the data delivery process was substantially impacted by this request. Usually, the client validates the data in the FBDI and provides sign-off, and that same file is used by the SI load resource to perform the associated data load. In this case, we took a multi-step approach to ensure that all functional and technical parties would have their needs met. First, we provided an enhanced Excel extract to the client with additional legacy information included for easier validation. Once the client approved that data, we would exclude any records that were flagged by our Critical-to-Quality checks and output that data in two formats for the System Integrator: 1) an Excel file that mimicked the format of the FBDI and 2) a CSV with the same data for load purposes. Several data conversions required multiple sets of files to be generated; we managed the data delivery with ease. Specifically regarding the Fixed Assets conversion, we provided data in chunks of 100,000 records or fewer for an effective and efficient load into Oracle.
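The exclude-then-chunk step for the CSV deliverable might look something like the following Python sketch. It is an assumption-laden illustration rather than the delivery process itself: the `asset_id` field, the `load_NNN.csv` file naming, and both function names are hypothetical, and only the 100,000-record ceiling comes from the project description.

```python
from __future__ import annotations

import csv
from pathlib import Path
from typing import Iterator


def chunked(rows: list[dict], size: int) -> Iterator[list[dict]]:
    """Yield consecutive slices of at most `size` rows."""
    for start in range(0, len(rows), size):
        yield rows[start:start + size]


def write_load_files(
    rows: list[dict],
    flagged_ids: set[str],
    out_dir: str,
    id_field: str = "asset_id",     # hypothetical key column
    chunk_size: int = 100_000,      # ceiling mentioned for Fixed Assets
) -> list[Path]:
    """Drop flagged records, then write the rest as numbered CSV files."""
    clean = [r for r in rows if r[id_field] not in flagged_ids]
    target = Path(out_dir)
    target.mkdir(parents=True, exist_ok=True)

    paths: list[Path] = []
    for n, chunk in enumerate(chunked(clean, chunk_size), start=1):
        path = target / f"load_{n:03d}.csv"
        with path.open("w", newline="", encoding="utf-8") as fh:
            # Column order is taken from the first row of the chunk.
            writer = csv.DictWriter(fh, fieldnames=list(chunk[0].keys()))
            writer.writeheader()
            writer.writerows(chunk)
        paths.append(path)
    return paths
```

Filtering before chunking keeps every file at or under the size limit and ensures that records failing pre-load checks never reach the load files.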

Project Timeline

After the project pause, the client schedule was extremely compact (6 test cycles, including Cutover, occurred in 6 months).

We understood the needs of the client and the pressure to meet their ambitious go-live date. We leveraged our many years of experience performing data migration to reduce risk along the way. Definian worked closely with the client and the System Integrator to create test cycle schedules and set expectations for data snapshots, data conversion turnaround time, and data validation. Definian also went above and beyond to work with individual client leads to instill confidence in the converted data.

We were faced with incredibly tight timing around Cutover, particularly for Purchase Orders.

We worked relentlessly during Cutover to ensure that all necessary steps were taken for our client’s success. We completed the entire Cutover PO data conversion cycle (from raw data to pre-load validation) in 24 hours. This timeline was substantially faster than other test cycles. To meet this timeline, we planned and conducted numerous test runs off of an earlier data snapshot as practice for the final Cutover PO conversion. This robust validation using the prior data snapshot allowed us to expedite the final Cutover PO sign-off. Definian worked around the clock with client vendors and leads to ensure that all of our bases were covered during this critical time.

The Results

Our client went live on time and with 100% load success for all data objects. Shortly after the go-live, the client team rolled out the Oracle Procurement and Financials system across their entire organization. The client business users had confidence in the converted Oracle data, and few manual updates were needed. In addition, our expeditious turnaround at Cutover allowed operating schools to function as usual; school facilitators were able to order food and supplies with minimal interruption. Child care has become more important than ever given the COVID-19 pandemic; we are glad to know that our client is able to operate effectively to serve working parents and teach the leaders of tomorrow.